This is issue 2026.10 of the API Changelog, a mix of API news, commentary, and opinion. In this issue, you'll get to know the most relevant API-related information from the week of March 2, 2026. Subscribe now so you never miss an issue of the API Changelog.

This issue of the API Changelog is sponsored by Jentic:

Jentic’s AI‑readiness scorecard gives teams a fast, standards‑aligned view of how usable an API is for agents. It surfaces gaps in summaries, examples, error handling, and auth that block reliable automation, and prioritises the improvements that raise both developer experience and agent success. Use it to benchmark APIs, align teams on readiness, and keep control as AI systems become the dominant API consumers.

Last week, MWC Barcelona 2026 solidified a narrative we’ve been tracking for some time: the transition from “API experimentation” to “scaled commercialization.” We are no longer just talking about connecting apps. We're talking about mobile networks becoming programmable platforms for autonomous agents. From OpenAI’s latest frontier model to a massive telecom expansion, the “nervous system” of the global economy is being rewired in real time.

But let me stop being abstract and show you some of the things that came out last week.

The most significant shift in how we consume APIs comes from the providers themselves. OpenAI launched GPT-5.4, which moves beyond simple text-in/text-out patterns. By merging the reasoning of the 5.2 series with advanced “computer-use” capabilities, the API now allows agents to interpret screenshots and programmatically execute mouse commands. For developers, the new Tool Search feature is the real winner, reducing token overhead by 47% by allowing agents to dynamically retrieve only the necessary tool definitions from massive ecosystems.
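To make the idea of retrieving only the necessary tool definitions concrete, here is a minimal sketch in Python. It is not OpenAI's implementation: the `ToolDef` type, the `search_tools` function, the sample catalog, and the simple word-overlap scoring are all assumptions made for illustration. The point is only that an agent can send a handful of matching definitions instead of the whole catalog.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical stopword list so filler words do not count as matches.
STOPWORDS = {"a", "an", "the", "for", "to", "in", "of"}


@dataclass(frozen=True)
class ToolDef:
    """Hypothetical tool definition: a name plus a one-line description."""
    name: str
    description: str


def _keywords(text: str) -> set:
    """Lowercase the text, split on spaces/underscores, and drop stopwords."""
    words = text.lower().replace("_", " ").split()
    return {word for word in words if word not in STOPWORDS}


def search_tools(query: str, catalog: List[ToolDef], limit: int = 2) -> List[ToolDef]:
    """Return up to `limit` tools that share the most keywords with the query."""
    query_words = _keywords(query)
    scored = []
    for tool in catalog:
        overlap = len(query_words & _keywords(f"{tool.name} {tool.description}"))
        if overlap > 0:
            scored.append((overlap, tool))
    # Highest overlap first; the sort is stable, so ties keep catalog order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]


# Illustrative catalog; a real ecosystem could hold thousands of definitions.
CATALOG = [
    ToolDef("send_email", "send an email message to a recipient"),
    ToolDef("create_issue", "create a github issue in a repository"),
    ToolDef("update_crm_record", "update a salesforce crm record"),
    ToolDef("get_weather", "get the weather forecast for a city"),
]

selected = search_tools("create a github issue for the login bug", CATALOG)
```

Only the definitions in `selected` would be placed in the model's context. A production system would more likely use embeddings or a search index than word overlap, but the token-saving principle is the same.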

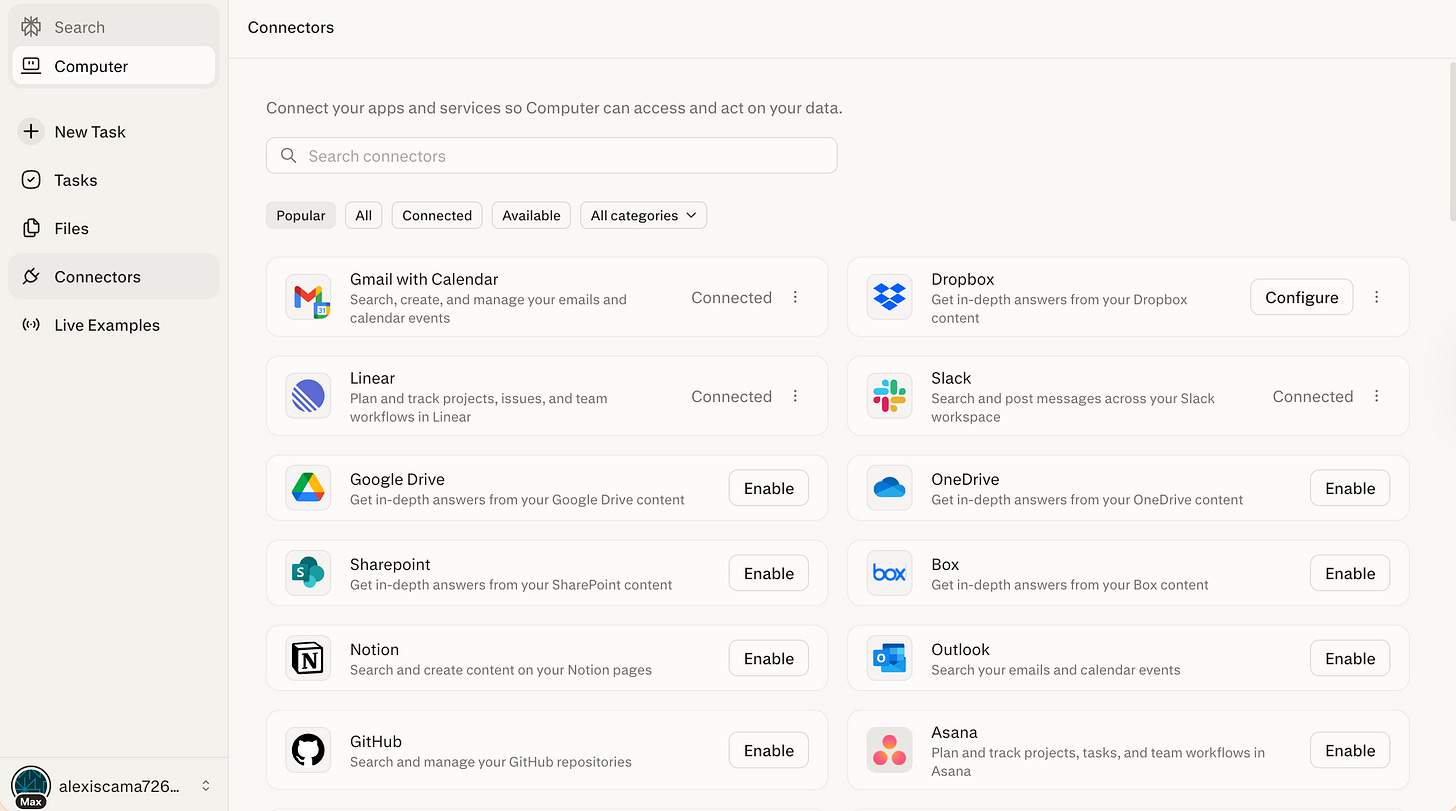

Meanwhile, Perplexity launched “Perplexity Computer,” a multi-model orchestration system. Instead of a single model, it uses a “swarm” of 19 specialized AI models—including Claude Opus 4.6 and Gemini—within a sandboxed environment. In terms of APIs, this represents the ultimate “digital worker,” programmatically calling endpoints for Gmail, GitHub, and Salesforce to deliver finished artifacts rather than just answers.

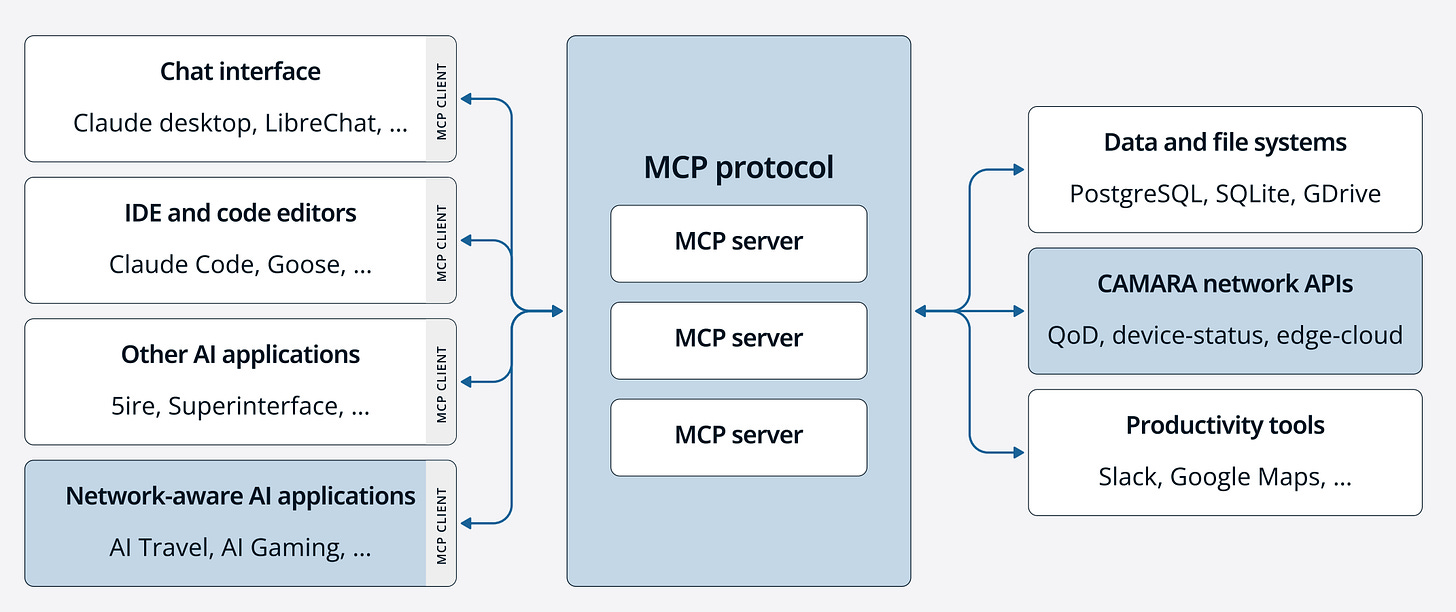

At MWC 2026, the GSMA Open Gateway initiative reached its commercial tipping point. Aduna Global, the Ericsson-led venture, announced a massive expansion, partnering with 15 major industry leaders. Partners include digital identity, fraud prevention, and communications providers like Google Cloud, Infobip, Sinch, and Vonage.

On top of that, Aduna is expanding its supply network to cover 40 mobile operators across key markets such as the USA, Germany, Spain, and Canada. Aduna is effectively becoming the “Stripe for Telco APIs”: it functions as a unified “global exchange” that aggregates standardized, CAMARA-based interfaces into a single access point, allowing developers to programmatically invoke network capabilities. By providing this plumbing at a global scale, Aduna is positioning the mobile network as a programmable engine for the agentic AI era, where autonomous systems can negotiate real-time connectivity requirements directly via these standardized API endpoints.

Additionally, Viettel Solutions signed a partnership with Aduna. By opening up its footprint across 10 countries via standardized APIs, Viettel is enabling third-party developers to programmatically verify identities and secure transactions globally, bypassing the need for fragmented, carrier-specific integrations. The partnership is designed to provide developers and enterprises with a simplified, consistent, and scalable model for accessing advanced mobile network capabilities via a single integration point. In terms of APIs, this collaboration centers on the GSMA Open Gateway initiative and the CAMARA open-source project.

It isn’t just the network that is becoming programmable. It’s also the business logic behind it.

Amdocs unveiled CES26, an agent-driven suite powered by their “aOS” (Agentic Operating System). This is a “TM Forum OpenAPI-first” architecture designed so that AI agents can “sense, decide, and act” across siloed domains like billing and network assurance without human intervention.

However, with great power comes extreme financial risk. We saw this play out in a devastating security breach for a Mexican startup. A stolen Gemini API key was used to rack up $82,314.44 in charges in just 48 hours. The incident highlights a critical flaw in the agentic era: legacy API keys, once used for low-cost map queries, are now high-speed conduits to expensive GPU compute. Without hard spending caps and real-time anomaly detection, an exposed key isn’t just a leak. It’s a bankruptcy event.
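What might a hard spending cap with basic anomaly detection look like on the client side? The following Python sketch is one possible shape, not a feature of any provider's SDK. The `SpendGuard` class, its thresholds, and the idea of passing in a per-call cost estimate are all assumptions for illustration. Provider-side budgets and key restrictions remain the stronger control, since a stolen key bypasses any client-side check.

```python
from dataclasses import dataclass, field
from typing import List


class BudgetExceeded(Exception):
    """Raised when a charge would push total spend past the hard cap."""


@dataclass
class SpendGuard:
    """Hypothetical guard that tracks estimated cost per API call."""
    hard_cap_usd: float
    spike_factor: float = 5.0  # flag charges this many times above the running average
    spent_usd: float = 0.0
    charges: List[float] = field(default_factory=list)

    def record(self, cost_usd: float) -> bool:
        """Record a charge and return True if it looks anomalous.

        Raises BudgetExceeded, without recording anything, if the charge
        would take total spend over the hard cap.
        """
        if self.spent_usd + cost_usd > self.hard_cap_usd:
            raise BudgetExceeded(
                f"charge of ${cost_usd:.2f} would exceed cap of ${self.hard_cap_usd:.2f}"
            )
        anomalous = False
        if self.charges:
            average = sum(self.charges) / len(self.charges)
            anomalous = cost_usd > self.spike_factor * average
        self.charges.append(cost_usd)
        self.spent_usd += cost_usd
        return anomalous


# Example: call guard.record(estimated_cost) before each billable request.
guard = SpendGuard(hard_cap_usd=100.0)
```

An anomalous return value is a signal to alert a human or pause the key. The exception is the hard stop that keeps a runaway loop from turning into a five-figure invoice.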

Even the oldest systems are being forced to adapt.

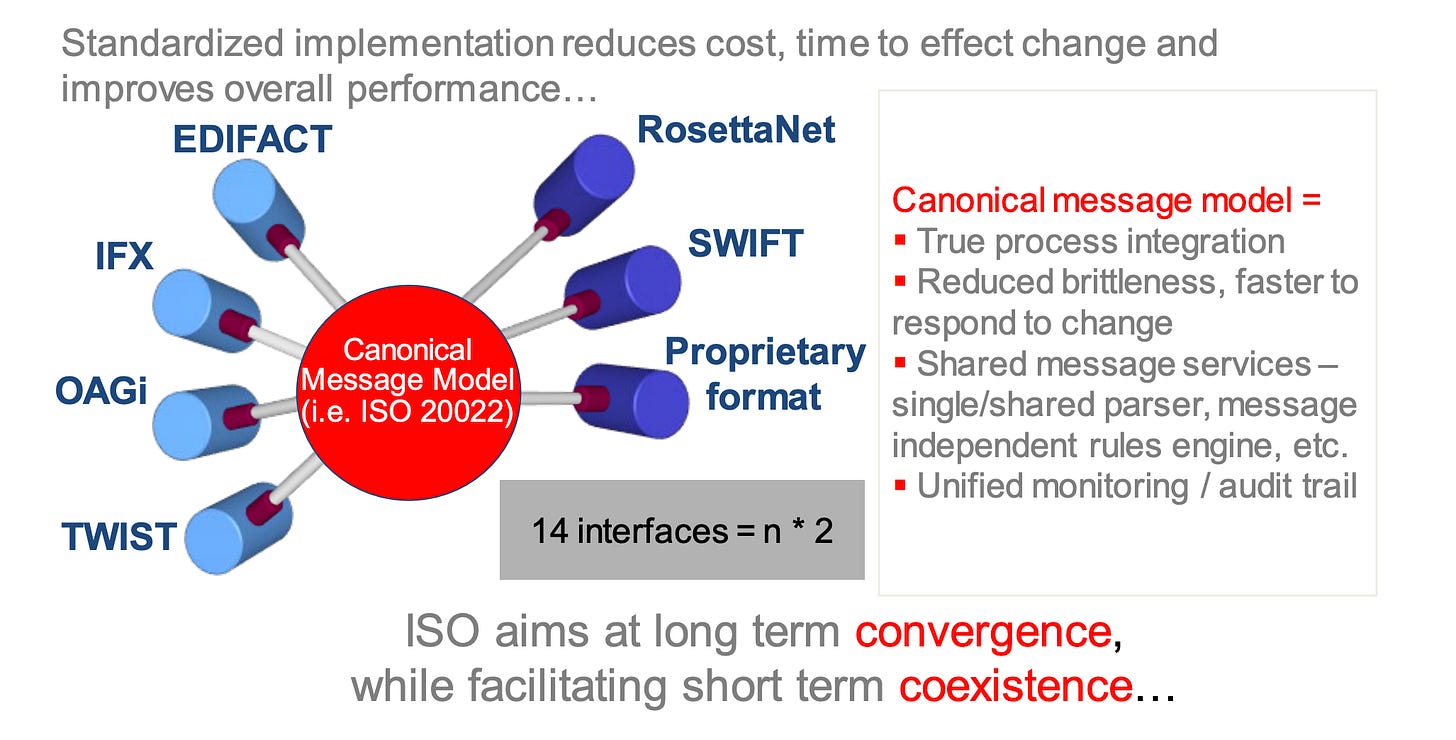

The ACH network is undergoing a mandatory modernization. New Nacha rules starting March 20, 2026, require financial institutions to move away from batch files toward real-time API-based risk monitoring. This replaces manual CSV uploads with API gateways and ISO 20022 messaging, allowing AI treasury agents to choose the optimal payment path between ACH and FedNow instantly.

We were reminded that even the best APIs are only as good as their uptime.

Anthropic’s Claude experienced a series of major global outages, notably on March 2 and 3, primarily triggered by an unprecedented surge in demand as users migrated from rivals following high-profile political and military AI controversies. The disruption initially paralyzed the Claude.ai web interface and authentication pathways, causing widespread HTTP 500 and 529 errors. It quickly cascaded into the Claude API, breaking third-party integrations and enterprise workflows for several hours. The event has become a case study for enterprise AI resilience, prompting developers to implement multi-model failover strategies and exponential backoff logic to prevent automated pipelines from collapsing when a primary AI provider’s API gateway fails.
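For readers who haven't wired this up yet, here is a minimal Python sketch of exponential backoff combined with multi-provider failover. Everything named here is hypothetical: `ProviderUnavailable`, `call_with_failover`, and the provider callables stand in for whatever SDK calls you actually use, and the retry counts and delays are placeholder values rather than recommendations.

```python
import random
import time
from typing import Callable, Optional, Sequence


class ProviderUnavailable(Exception):
    """Hypothetical error a provider wrapper raises on retryable failures
    (for example, an HTTP 500 or 529 response)."""


def call_with_failover(
    providers: Sequence[Callable[[str], str]],
    prompt: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each provider in order, retrying with exponential backoff and jitter.

    Moves to the next provider once `max_attempts` calls have failed.
    Raises RuntimeError if every provider is exhausted.
    """
    last_error: Optional[Exception] = None
    for provider in providers:
        for attempt in range(max_attempts):
            try:
                return provider(prompt)
            except ProviderUnavailable as error:
                last_error = error
                # Skip the wait after the final attempt; fail over immediately.
                if attempt < max_attempts - 1:
                    delay = base_delay * (2 ** attempt)  # 0.5s, 1s, 2s, ...
                    # Jitter spreads out retries from many clients.
                    sleep(delay + random.uniform(0, delay))
    raise RuntimeError("all providers failed") from last_error
```

The `sleep` parameter is injectable so the retry schedule can be tested without real waiting. In practice, each entry in `providers` would wrap a different vendor's client and translate its retryable errors into `ProviderUnavailable`.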

To help developers manage all this complexity, Postman unveiled a new AI-native era at MWC Barcelona 2026. The centerpiece of this launch is Agent Mode, a reasoning layer that uses deep context from API specifications, test results, and real production behavior to autonomously execute multi-step workflows like debugging failing endpoints or generating comprehensive test suites. In terms of APIs, the platform now treats them as the primary interface for agentic consumers, introducing native support for the Model Context Protocol (MCP) to help AI agents discover and interact with services safely.

Whether it’s OpenAI slashing token overhead, Aduna aggregating global networks, or Postman automating the dev cycle, the theme seems to be the removal of friction. APIs have evolved from static documentation to the active nervous system of autonomous business logic.

As we move toward an era where agents are the primary API consumers, the gap between data and action is shrinking. Organizations that treat their APIs as programmable products will be the ones to lead this automated economy.

Until next week!